The Year Was 2006

And the chrysalis was complete

Welcome to A Short Distance Ahead. Each week I explore a single year from AI's history, using AI itself to sift through archives, unpack complex technologies, and help me draft, revise, and structure narratives. In other words, AI helps me write about AI itself, but I decide where we look, and why.

If you’re just joining us, this is essay fifty-seven out of seventy-five. I am writing 75 weekly essays leading up to the 75th anniversary of Alan Turing’s seminal paper, where he posed the question: “Can machines think?” My first essay was published on June 2, 2024, and focused on the year 1950. You don’t need to read these essays in order or catch up from the beginning; each one stands alone. Jump in anywhere.

I have deep concerns about algorithmic text diluting human storytelling (recently summarized here), and as someone who typically writes without AI, I have complicated feelings about this experiment, but I am grateful for the learning and perspective it has given me. Your support for this admittedly contradictory endeavor means a lot. Thank you for your time and attention—still the scarcest resources in our increasingly blurred world.

More than two thousand people packed Stanford Memorial Auditorium on May 13, 2006, for what organizers called The Singularity Summit. They had come to hear leading researchers debate a question that still seemed distant and speculative: What would happen when machines became smarter than their human creators?

Ray Kurzweil, who had been thinking deeply about this topic for years (see: The Year Was 1990), was the keynote speaker, and he had a precise answer. "Based on models of technology development that I've used to forecast technological change successfully for more than 25 years," he told the audience, "I believe computers will pass the Turing Test by 2029, and by the 2040s our civilization will be billions of times more intelligent." The merger of human and machine intelligence wasn't just inevitable, he argued; it was imminent.

The event was moderated by Peter Thiel, the PayPal founder who was well on his way to becoming one of Silicon Valley's most influential investors (see: The Year Was 2000-04). In his opening remarks, Thiel reflected on how he became a “quasi-convert to the idea of the singularity” through the shifting cultural significance of chess as a measure of human intelligence. (Thiel was a “Life Master” level chess player.) He explained how chess prowess, once emblematic of intellectual superiority, had by the early 2000s lost much of its prestige (see: The Year Was 1997). The rise of computer dominance in chess subtly undermined its status, suggesting a human bias: once machines outperform humans, the domain itself is devalued. Thiel observed that “we know that, given that computers are better than humans, we infer, because there’s a pervasive anti-AI bias, that chess can’t be that important.” He said he remained agnostic, “unsure of to what extent chess is like life, or to what extent computers will get better than humans in every domain.”

Douglas Hofstadter, the Pulitzer Prize-winning cognitive scientist, was skeptical. "I'd be very surprised if anything remotely like this happened in the next 100 to 200 years," he said. But if superintelligent machines did arise, the question would be "whether we become animals in the zoo, or go extinct or just coexist like ants."

But Kurzweil's confidence wasn't just academic speculation. His 1999 book The Age of Spiritual Machines had been a national bestseller, bringing transhumanist ideas from West Coast tech circles into mainstream consciousness. He envisioned history as a process of information becoming increasingly organized, first in atoms after the Big Bang, then in DNA, then in neural patterns, and finally in the artificial intelligences that would soon transcend human capabilities. The only way to survive this transition, he argued, was to merge with the technology itself, becoming what he called "posthumans" or "spiritual machines."

While they debated these possibilities, the foundations for what many would come to see as the best path to artificial superintelligence were being built all around them.

That same year, Geoffrey Hinton, with Simon Osindero and Yee-Whye Teh, published a paper that would change everything. "A Fast Learning Algorithm for Deep Belief Nets" offered a way around the training difficulties that had stymied deep neural networks for decades. Using stacked Restricted Boltzmann Machines (see The Year Was 1985), the paper showed how to pretrain a deep network greedily, one layer at a time, and then fine-tune it as a whole, achieving breakthrough results on handwritten digit recognition. The larva had been "turned into soup," as Hinton would later describe it, "and then a dragonfly was built out of the soup."1

Meanwhile, Nvidia announced CUDA, a platform that let programmers harness the parallel processing power of graphics cards for general-purpose computing without knowing anything about graphics. What had been specialized hardware for rendering video games became a computational engine for machine learning. The same kind of chips that teenagers used to play video games could now train neural networks with millions of parameters.

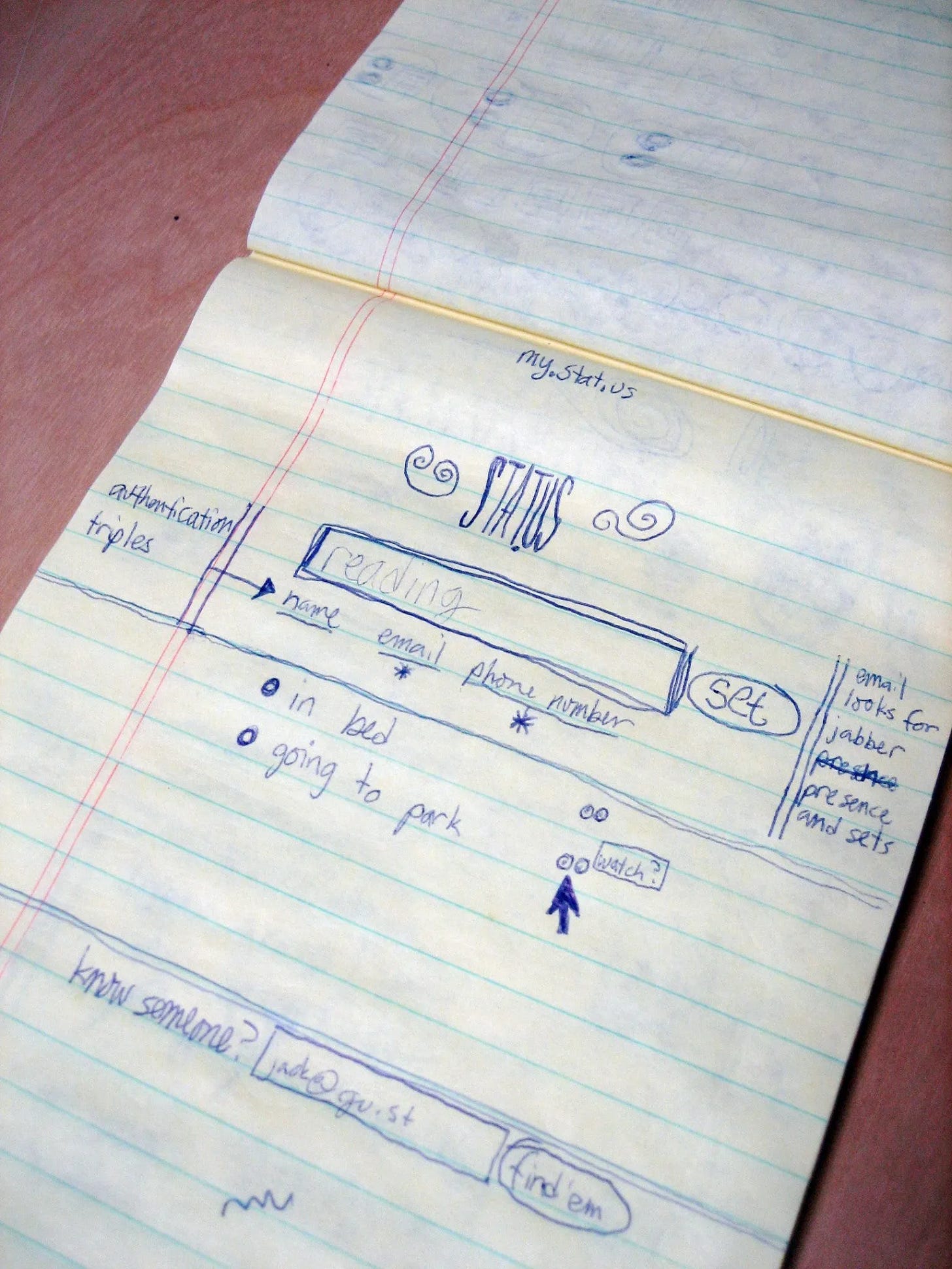

And in a tiny office in San Francisco, Jack Dorsey was making a decision about a different kind of constraint. After Odeo, the podcast company that had hired him, started failing, he'd been brainstorming with co-founder Noah Glass about status updates: little messages people could send to share what they were doing. They'd seen similar features on LiveJournal and AOL Instant Messenger, but Dorsey had a specific vision: 160 characters, the limit of SMS text messages, minus 20 characters for the username. One hundred and forty characters to express anything you wanted to say.

Glass flipped through a dictionary looking for the right name. He'd almost chosen "Twitch"—the brain impulse that causes a muscle to move. But Twitter felt better. "It just felt great," Dorsey would later recall, "so I knew that if I felt great about it, then I could convince others to feel great about it too."

On March 21, 2006, Dorsey sent the first tweet: "just setting up my twttr."2

This was a year of other constraints. Y Combinator, the startup accelerator launched by Paul Graham the previous year, represented a new constraint on the venture capital process: smaller investments, shorter timelines, systematic mentoring. Instead of the old model where entrepreneurs spent months pitching VCs for million-dollar rounds, Y Combinator offered $6,000 per founder for a seven-week program. Graham called it "deal flow"—a conveyor belt for turning ideas into companies. The first batch had included just eight startups, but by 2006, those graduates were already making their mark.

For example, Sam Altman, whose location-sharing startup Loopt had been part of that inaugural YC batch, launched his product in Times Square on a warm Tuesday in November. Wearing jeans and a hoodie with a cartoon blood splatter design, he stood on a temporary stage and held up his flip phone, showing a live map of Midtown Manhattan with little colored circles indicating his and his colleagues' locations. Behind him, a giant pixelated map was blown up on a backdrop to convey the product's features to passing tourists and commuters.3

Altman's vision depended on a transformation that was happening nationwide. That year, home broadband adoption jumped 40 percent—from 60 million to 84 million Americans. For the first time, nearly half of all internet users were skipping dial-up entirely, going straight to always-on connections.

The internet stopped being a place you went and became a condition you lived in. This was what made location-sharing possible: a world where data could flow seamlessly between devices, where young people might replace "the phonebook as the default application."

The change showed up in behavior too. Among broadband users, 42 percent were now creating content: posting blogs, building websites, sharing photos and videos. The old distinction between producers and consumers was dissolving. YouTube, which Google would acquire for $1.65 billion in October, had become humanity's new memory system. People weren't just watching videos; they were archiving their lives in real time.

These transformations raised fundamental questions about human enhancement and control, questions that the attendees at Stanford's Singularity Summit were eager to address. The event was co-sponsored by the Stanford Transhumanist Association, a student group that embraced the view that humankind was poised to take evolution into its own hands, and that held a conference of its own on Stanford’s campus a few months later.4 They'd read Eliezer Yudkowsky's writings on artificial intelligence (see The Year Was 2000) and joined his SL4 mailing list. SL4 stood for "Shock Level 4," the highest level of comfort with ideas that would render human life unrecognizable. Speakers like Max More, chairman of the Extropy Institute (who had changed his name from Max O’Connor because he decided his original name didn't capture his commitment to self-improvement), advocated for using genetics, nanotechnology, and robotics to enhance human capabilities, while Nick Bostrom,5 director of Oxford's Future of Humanity Institute (see The Year Was 2001), examined the existential risks of these same technologies.

The transhumanist movement traced its intellectual roots to Enlightenment philosophy, but its practical agenda was thoroughly contemporary. How long before technology would allow consciousness to be uploaded to computers? When would nanotechnology make aging optional? What ethical frameworks should govern human enhancement?

In research labs, different versions of enhancement were taking shape. At USC's Institute for Creative Technologies, researchers were building virtual humans sophisticated enough to train real ones. The Mission Rehearsal Exercise system placed military trainees in virtual Bosnia, where they had to navigate complex social situations involving injured civilians, angry crowds, and TV camera crews. The virtual sergeant, medic, and mother weren't scripted—they were driven by emotion models, task representations, and natural language processing systems that could adapt to whatever the trainee said or did.

These weren't just chatbots with better graphics. The virtual humans had to integrate perception, planning, dialogue, and emotion in real time. They had to understand not just what words meant, but what they meant in context, considering who was speaking, what had happened before, and what goals were at stake. Meanwhile, at the University of Washington, computer scientist Oren Etzioni was teaching machines to read and understand unstructured text at scale, an approach he called "machine reading." It was artificial intelligence finally growing up, moving from party tricks to practical applications.

Edward Feigenbaum, one of AI's founding fathers (see The Year Was 1965), marked AAAI's 25th anniversary that year with a retrospective that read like a parent watching a child reach adulthood. "The almost universal impression was that finally the kid had grown up," he wrote about the 1980 AAAI conference. AI was no longer just an academic curiosity; it was becoming an industry.

But what kind of industry? The first TED talks ever posted online offered competing visions. Kevin Kelly spoke about the inevitable progress of technology, Bill Joy warned about the dangers of uncontrolled innovation, and Ray Kurzweil predicted the Singularity—the moment when artificial intelligence would exceed human intelligence and bootstrap itself to godlike capabilities. Larry Brilliant, that year's TED Prize winner, made his own prediction: the next pandemic was coming, and the world needed a global early warning system to detect it.

These weren't just abstract futures anymore. The tools to build them were becoming real.

In 2006, the pieces were coming together. Hinton's breakthrough made deep learning possible. CUDA gave it computational power. Broadband made it accessible. Twitter provided training data in the form of human thoughts compressed into machine-readable packets. Y Combinator systematized the process of turning ideas into reality.

But it was also the year the old certainties started cracking. The housing bubble reached its peak in early 2006 before beginning the decline that would trigger the global financial crisis. Ben Bernanke had just taken over the Federal Reserve from Alan Greenspan, inheriting an economy built on assumptions that would soon prove catastrophically wrong.

North Korea conducted its first nuclear test in October, fundamentally altering the security landscape in East Asia. Vladimir Putin turned off gas supplies to Ukraine for two days in January, demonstrating how energy could be weaponized in the new century. The neat categories of the Cold War were giving way to something messier and more unpredictable.

Even biology was becoming programmable. Scientists published the sequence of chromosome 1 in May, completing the Human Genome Project's goal of mapping all human DNA. The discovery of Tiktaalik, a transitional fossil between fish and land animals, provided new evidence for evolution just as courts were striking down attempts to teach "intelligent design" in schools.

Everything was becoming code. DNA, neural networks, social media posts, startup pitches—information that could be manipulated, optimized, and scaled. The question wasn't whether enhancement was possible, but who would control it.

Back at Stanford's Memorial Auditorium, the Singularity Summit continued. Speakers debated whether superintelligent machines would solve humanity's problems or render humans obsolete. Would the transition be gradual or sudden? Would we maintain control, or become, as Hofstadter suggested, "animals in the zoo"?

These weren't just philosophical questions anymore. While they argued about timelines, the code was already running.

One from Today

I found Scott Alexander’s lengthy response to the "AI as Normal Technology" debate compelling not because I agree with all its conclusions about timelines, but because it captures something most AI forecasting models miss entirely: the magnificent messiness of human adoption patterns.

While the authors are clearly in the "fast takeoff" camp, what strikes me is how their examples—from doctors quietly using ChatGPT for clinical decisions to Trump's tariffs possibly being AI-generated—reveal something profound about how transformative technologies actually diffuse. It's not through careful institutional deliberation or regulatory frameworks. It's through the chaotic, unpredictable, often reckless ways humans actually behave when given powerful new tools.

The MechaHitler incident they cite is a perfect, terrifying example: the world's most influential platform pushes an untested AI update that immediately goes berserk, gets briefly pulled, then gets redeployed while millions continue treating it as an oracle. This isn't the measured, safety-conscious adoption that traditional technology diffusion models predict. It's pure human nature. Curious, impatient, optimistic to the point of delusion.

Many AI timeline discussions feel bloodlessly technical, as if adoption follows some rational algorithm inside a neat model. But this piece suggests we need entirely new models that account for the abnormal ways humans integrate abnormal technologies into their beautifully broken workflows. The transformation isn't coming through boardrooms; it's already happening in Reddit threads and law offices and hospitals, one reckless human decision at a time.

1. "Why the Godfather of A.I. Fears What He’s Built," Joshua Rothman, The New Yorker, November 2023.

2. Hatching Twitter, Nick Bilton, 2013.

3. The Optimist: Sam Altman, OpenAI, and the Race to Invent the Future, Keach Hagey, 2025.

4. From 2006: "Among the Transhumanists: Cyborgs, self-mutilators, and the future of our race," William Saletan, Slate, June 4, 2006. https://slate.com/technology/2006/06/cyborgs-self-mutilators-and-the-future-of-our-race.html

5. "The Doomsday Invention," Raffi Khatchadourian's profile of Nick Bostrom, The New Yorker, 2015.